ANALYZE for Codebases: Giving Claude Code a Persistent Memory of Your Repo

Claude Code bills per token, and every fresh session starts cold — grepping and re-reading the same files to rediscover the same architecture. I built it a queryable, self-healing knowledge graph and measured 9 real queries. Median savings: 85%. Worst case: 42%. Here's the methodology, the ugly data points, and where it breaks.

For readers who don't use AI coding tools: Claude Code is an AI agent that writes and edits your codebase for you — and it bills per token for every file it reads. When you ask it "where does X happen?", it runs grep, opens several files, reads them into its context, and pays for every line. Your own grep is free. An AI agent's grep is priced in fractions of a cent per thousand tokens, and it adds up. This post is about cutting that specific cost — and, more importantly, about why cold-start amnesia is the real bug in AI-assisted coding.

The Real Problem: Cold-Start Amnesia

Every time I open a new Claude Code session and ask something like "where does document encryption happen in this service?", the same ritual plays out: `glob **/*.py`, `grep encrypt`, read six files, realize one is the wrong abstraction layer, read four more, finally answer.

Total cost: 20–40K tokens on a good day, 80K+ when it wandered. Answer quality: fine, eventually. Time: a couple of minutes of watching tool calls scroll by.

The infuriating part is that the architecture didn't change between sessions. Claude was rediscovering the same map every single time, from scratch, because the map doesn't exist anywhere it can read cheaply. This is cold-start amnesia, and it's the load-bearing bug: every lab is racing to scale reasoning, while the actual bottleneck is that each session starts with zero memory of your code's shape.

Databases solved this in the 1970s. Postgres doesn't rediscover your schema and data distribution on every query. It keeps planner statistics in a catalog (pg_statistic), caches catalog lookups and query plans until DDL invalidates them, and exposes ANALYZE to refresh the statistics when the data drifts. Grep-per-session is a full sequential scan. What I wanted was ANALYZE for a codebase: a persistent structural index Claude could query in ~2K tokens instead of grepping from scratch in 40K.

So I built the map once and told Claude where to look.

What I Actually Built

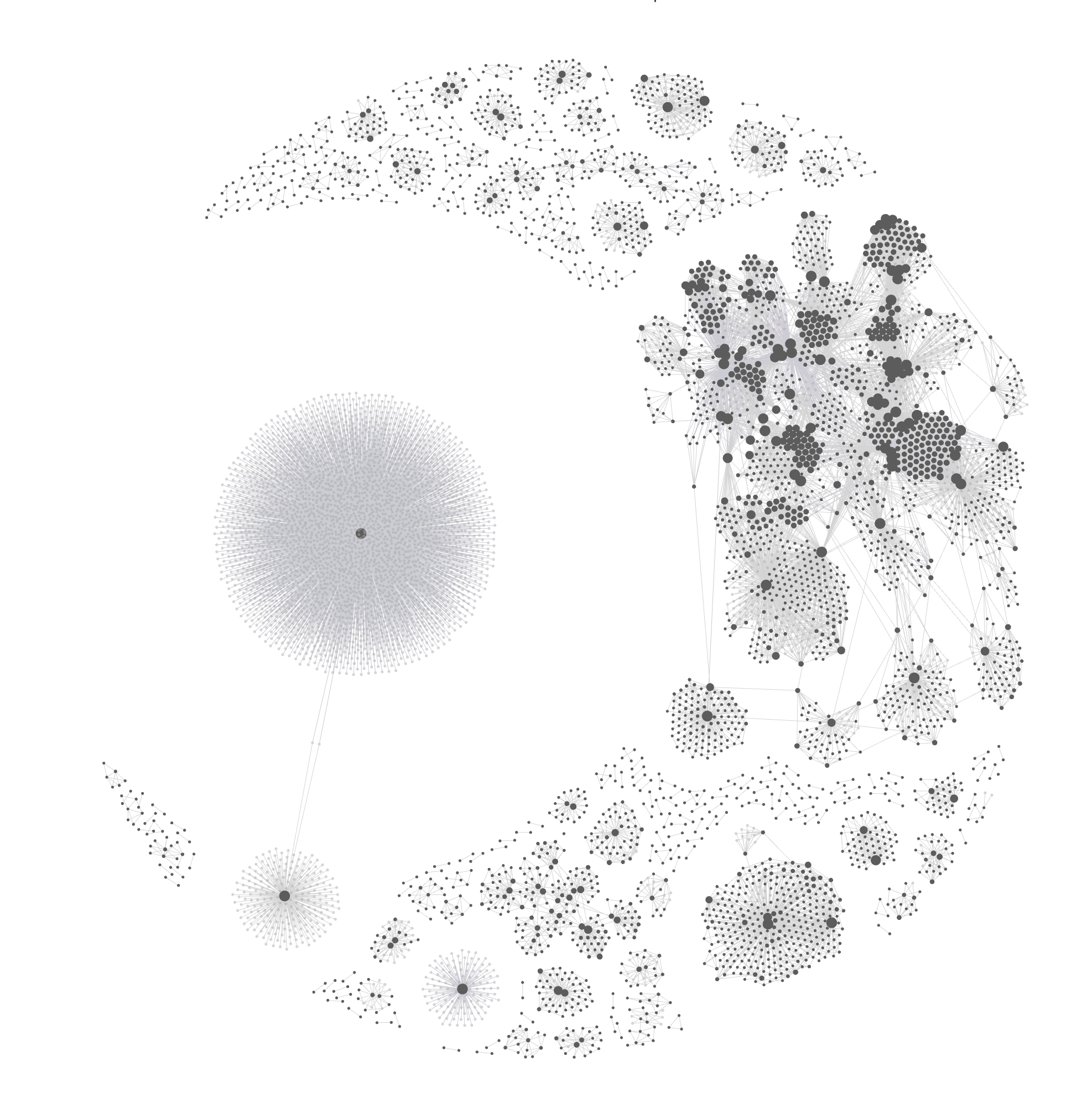

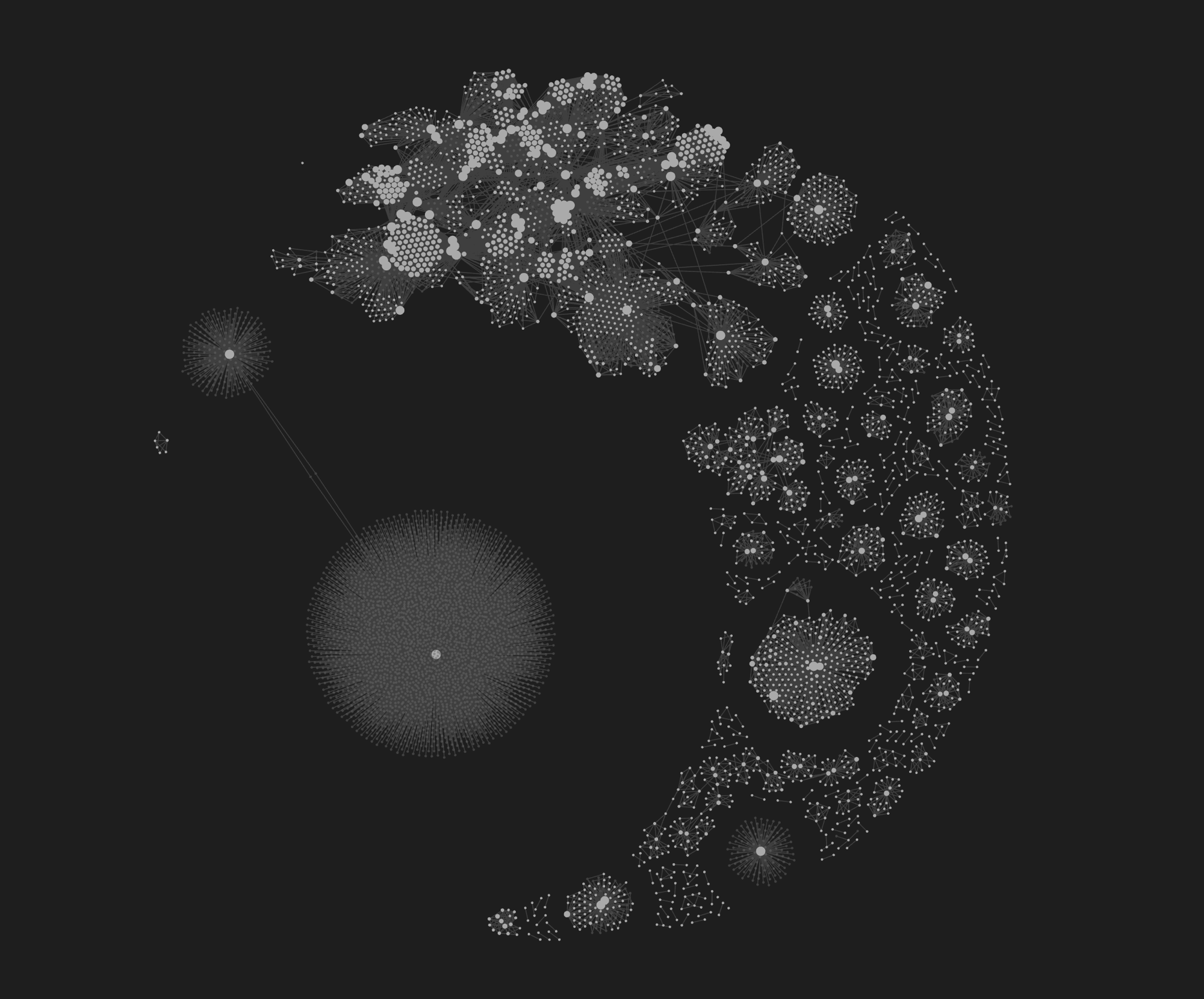

I have three knowledge graphs sitting on disk:

| Graph | Nodes | Covers |
|---|---|---|
| harness | 116 | ~/.claude/ (commands, hooks, agents, settings) |
| claudeclaw | 217 | A daemon plugin I maintain |
| work-monorepo | 3,142 | A private work project (backend + frontend + shared libs) |

Each graph is a JSON file with nodes (files, functions, classes, concepts) and edges (calls, imports, references, semantic links). They live in ~/.claude/graphify-out-*/graph.json.
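For readers who want to poke at a graph like this, here is a minimal sketch of loading one into memory. The JSON layout is my assumption for illustration, not graphify's documented schema: `nodes` with `id`/`kind`, and `edges` with `source`/`target`/`relation`/`confidence`. The node names are hypothetical.

```python
import json
from collections import defaultdict

# Hypothetical graph.json contents; real graphify field names may differ.
EXAMPLE = """
{
  "nodes": [
    {"id": "daemon.py", "kind": "file"},
    {"id": "Heartbeat.tick", "kind": "function"},
    {"id": "heartbeat", "kind": "concept"}
  ],
  "edges": [
    {"source": "daemon.py", "target": "Heartbeat.tick",
     "relation": "calls", "confidence": "EXTRACTED"},
    {"source": "Heartbeat.tick", "target": "heartbeat",
     "relation": "semantic", "confidence": "INFERRED"}
  ]
}
"""


def load_graph(text):
    """Parse graph JSON into (nodes by id, edge list, undirected adjacency)."""
    data = json.loads(text)
    nodes = {node["id"]: node for node in data["nodes"]}
    adjacency = defaultdict(set)
    for edge in data["edges"]:
        # Store both directions so neighbourhood queries ignore edge direction.
        adjacency[edge["source"]].add(edge["target"])
        adjacency[edge["target"]].add(edge["source"])
    return nodes, data["edges"], dict(adjacency)
```

For a real graph, pass the contents of one of the `graph.json` files instead of `EXAMPLE`.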

The tool that builds them is graphify — a CLI that walks a directory and produces a graph via a four-stage pipeline:

Tree-sitter gives you ground-truth structural edges (imports, calls, inheritance). The LLM pass adds inferred semantic edges — the "this concept relates to that concept" links grep can never find. Louvain clustering (a standard community-detection algorithm) groups dense subgraphs into communities.
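Louvain works in two repeating phases. First it greedily moves each node to whichever neighbouring community raises modularity. Then it collapses each community into a single node and starts over. The sketch below only computes the score Louvain optimizes (Newman's modularity Q). It is not graphify's clustering code, and the toy graph is hypothetical.

```python
from collections import defaultdict


def modularity(edges, community):
    """Newman modularity Q for an undirected, unweighted graph.

    edges: list of (u, v) pairs; community: dict mapping node -> community id.
    Q = sum over communities c of  L_c / m - (d_c / 2m)^2,
    where m is the edge count, L_c the edges inside c, d_c the summed degree of c.
    """
    m = len(edges)
    inside = defaultdict(int)  # L_c
    degree = defaultdict(int)  # d_c
    for u, v in edges:
        degree[community[u]] += 1
        degree[community[v]] += 1
        if community[u] == community[v]:
            inside[community[u]] += 1
    return sum(inside[c] / m - (degree[c] / (2 * m)) ** 2 for c in degree)


# Two triangles joined by a single bridge edge (c-d).
edges = [("a", "b"), ("b", "c"), ("a", "c"), ("c", "d"),
         ("d", "e"), ("e", "f"), ("d", "f")]
split = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
print(round(modularity(edges, split), 3))                  # 0.357
print(round(modularity(edges, {n: 0 for n in split}), 3))  # 0.0
```

Louvain would keep the two-triangle split, because it scores higher than lumping every node into one community.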

For the work monorepo (~3K files across three subprojects) the first build took ~15 minutes and a few dollars in LLM calls. Incremental updates after that re-extract only changed files — cheap.
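I haven't checked how graphify decides what changed. A common approach, sketched here as an assumption, is to keep a content-hash manifest per file. Each rebuild then re-extracts only the paths whose hash differs from the previous build. The extension list is a hypothetical default.

```python
import hashlib
import os


def file_hashes(root, exts=(".py", ".ts", ".tsx")):
    """Map each matching file (path relative to root) to its SHA-256 digest."""
    hashes = {}
    for dirpath, _, filenames in os.walk(root):
        for name in filenames:
            if name.endswith(exts):
                path = os.path.join(dirpath, name)
                with open(path, "rb") as f:
                    hashes[os.path.relpath(path, root)] = hashlib.sha256(f.read()).hexdigest()
    return hashes


def changed_files(old, new):
    """Return (added_or_modified, deleted) paths between two hash manifests."""
    dirty = sorted(path for path, digest in new.items() if old.get(path) != digest)
    deleted = sorted(path for path in old if path not in new)
    return dirty, deleted
```

Only the `dirty` paths would go back through extraction. Nodes that came from `deleted` paths would be dropped from the graph.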

How Claude Actually Queries It

The important trick isn't building the graph. It's making Claude reach for it before grepping. I added mandatory triggers to my global CLAUDE.md:

- "Where is X in this project?" → `graphify query "X" --budget 2000`

- "How does command /foo work?" → query the harness graph

Graphify exposes three query types:

- BFS around a concept — broad context, related nodes, which community they belong to

- Paths between two nodes — "does the document editor already talk to the encryption service?"

- Node explanations — blast radius of a function (callers, callees, centrality score)
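The path and blast-radius queries map onto textbook graph operations. Here is a minimal sketch, assuming a plain adjacency dict and a list of (caller, callee) pairs. Graphify's own centrality score may be computed differently, so degree centrality stands in for it here.

```python
from collections import deque


def shortest_path(adjacency, start, goal):
    """Fewest-hop path from start to goal via breadth-first search, or None."""
    parents = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            path = []
            while node is not None:  # walk parent links back to start
                path.append(node)
                node = parents[node]
            return path[::-1]
        for nxt in sorted(adjacency.get(node, ())):
            if nxt not in parents:
                parents[nxt] = node
                queue.append(nxt)
    return None


def blast_radius(calls, target):
    """Direct callers, direct callees, and degree centrality of `target`.

    calls: list of (caller, callee) pairs.
    """
    callers = sorted({a for a, b in calls if b == target})
    callees = sorted({b for a, b in calls if a == target})
    nodes = {n for pair in calls for n in pair}
    # Degree centrality: share of the other nodes directly linked to target.
    centrality = len(set(callers) | set(callees)) / max(len(nodes) - 1, 1)
    return callers, callees, centrality
```

A `None` from `shortest_path` is itself an answer: in this graph, the two nodes aren't connected at all.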

A typical query returns 1,400–1,800 tokens of structured output. Claude then does 2–3 targeted Read calls to verify specific files before acting.
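A `--budget` flag implies the query stops expanding once its output would exceed a token allowance. Here is a sketch of that idea as a budget-bounded BFS. Two pieces are assumptions, not necessarily what graphify does: a naive substring match picks the seed nodes, and tokens are estimated at roughly 4 characters each.

```python
from collections import deque


def estimate_tokens(text):
    """Rough token estimate (~4 characters per token); use a real tokenizer for accuracy."""
    return max(1, len(text) // 4)


def query_context(nodes, adjacency, term, budget=2000):
    """Budget-bounded BFS around nodes that mention `term`.

    nodes: dict id -> short description; adjacency: dict id -> set of neighbour ids.
    Emits one line per node, nearest hops first, and stops before exceeding `budget`.
    """
    term = term.lower()
    seeds = sorted(n for n, desc in nodes.items()
                   if term in n.lower() or term in desc.lower())
    seen = set(seeds)
    queue = deque((seed, 0) for seed in seeds)
    lines, used = [], 0
    while queue:
        node, hops = queue.popleft()
        line = f"[{hops}] {node}: {nodes.get(node, '')}"
        cost = estimate_tokens(line)
        if used + cost > budget:
            break  # the next line would blow the budget
        lines.append(line)
        used += cost
        for nxt in sorted(adjacency.get(node, ())):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, hops + 1))
    return lines
```

Because BFS emits nearest neighbours first, a tight budget trims the periphery rather than the nodes closest to the query.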

"Why Not Just an LSP?"

This is the first objection any compiler-brained reader will raise, and it's a fair one. Language servers, tree-sitter indexes, ctags, and pyright already solve structural lookup well. ctags has done it for decades, and LSP standardized incremental lookup across editors in 2016. Why build a graph?

Three reasons, in order of importance:

- Cross-language and cross-artefact edges. An LSP lives inside one language. The semantic pass finds edges like "this TypeScript union shadows a Python enum in the schema file" or "this UI screenshot is referenced from a backend docstring." These are real bugs I've shipped. No LSP will ever flag them.

- Community structure, not just symbols. Louvain clustering surfaces boundaries: which 340 nodes form the "document editor" subsystem and which sit on its edges. Pyright tells you where `DocumentsService` is defined. The graph tells you what subsystem it belongs to and what else belongs with it.

- Natural-language queries, not symbol lookups. `graphify query "document encryption"` works without knowing the class name. An LSP needs the exact symbol.

Where LSPs beat the graph: precision, latency, and zero setup. If all you need is "go to definition" on a single file, pyright wins. The graph is for the layer above that — architectural queries, not symbolic ones. Use both.

Making It Auto-Adaptive and Self-Healing

A static graph decays the moment you merge a PR. The whole thing falls apart if you have to remember to rebuild it. So I wired in a pipeline that keeps it current without me thinking about it:

- SessionStart hook tracks drift. When Claude Code starts, a hook measures sessions and edit-minutes since the last ingest. Over threshold → it prints a reminder to run `/kg-evolve`. No cron, no daemon.

- `/kg-evolve` runs three stages:
  - Ingest the staging inbox (files touched since last run).
  - Quality checks consistency: orphans, broken edges, drifted communities.
  - Heal re-embeds stale nodes, prunes dead edges, and re-runs the semantic pass where density dropped.

- Everything is reversible. Every evolve run snapshots the previous graph. If heal makes things worse, `/kg-rollback` restores the last good version. Without reversibility I'd never feel safe running an LLM-mutation pass on live graph state.
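The drift check is simple enough to sketch. Everything here is hypothetical: the state-file path, its keys, and the thresholds. The sketch also assumes something else, such as an edit hook, accumulates `edit_minutes_since_ingest`. Only the session counter is bumped here.

```python
import json
import time


def drift_reminder(state, now, max_sessions=10, max_edit_minutes=120):
    """Return a reminder if drift since the last ingest crosses a threshold, else None.

    state keys (all hypothetical): "last_ingest" (epoch seconds),
    "sessions_since_ingest", "edit_minutes_since_ingest".
    """
    sessions = state.get("sessions_since_ingest", 0)
    minutes = state.get("edit_minutes_since_ingest", 0)
    if sessions < max_sessions and minutes < max_edit_minutes:
        return None
    days = (now - state.get("last_ingest", now)) / 86400
    return (f"Knowledge graph drift: {sessions} sessions, {minutes} edit-minutes "
            f"({days:.1f} days) since last ingest. Run /kg-evolve.")


def main(state_path):
    """SessionStart entry point: count this session, persist state, maybe print a reminder."""
    try:
        with open(state_path) as f:
            state = json.load(f)
    except FileNotFoundError:
        state = {"last_ingest": time.time()}
    state["sessions_since_ingest"] = state.get("sessions_since_ingest", 0) + 1
    with open(state_path, "w") as f:
        json.dump(state, f)
    message = drift_reminder(state, time.time())
    if message:
        print(message)
```

The hook command would call something like `main(os.path.expanduser("~/.claude/kg-drift.json"))`. A successful `/kg-evolve` would then reset the counters and `last_ingest`.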

This is the actual unlock. I've watched many people try static indexes for AI agents; they all get abandoned by week two because maintenance is annoying. Self-healing is the moat — and the ingest → quality → heal → rollback pattern generalizes to any AI memory system (RAG stores, agent scratchpads, embedding indexes all have the same drift problem).
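Two pieces of that pattern are easy to show concretely. The first is the quality stage's orphan and broken-edge checks. The second is snapshot-before-mutate with rollback. This is a minimal sketch, assuming the same hypothetical `nodes`/`edges` JSON layout and a made-up, sequentially numbered snapshot naming scheme. Drifted-community detection and the LLM heal pass are out of scope.

```python
import glob
import os
import shutil


def quality_report(graph):
    """Find orphan nodes (no valid edges) and broken edges (an endpoint is missing)."""
    ids = {node["id"] for node in graph["nodes"]}
    touched, broken = set(), []
    for edge in graph["edges"]:
        if edge["source"] in ids and edge["target"] in ids:
            touched.update((edge["source"], edge["target"]))
        else:
            broken.append(edge)
    return {"orphans": sorted(ids - touched), "broken_edges": broken}


def snapshot(graph_path, snap_dir):
    """Copy the current graph into snap_dir before a mutating evolve run."""
    os.makedirs(snap_dir, exist_ok=True)
    existing = sorted(glob.glob(os.path.join(snap_dir, "graph-*.json")))
    # Zero-padded sequence numbers keep lexical order equal to creation order.
    dest = os.path.join(snap_dir, f"graph-{len(existing):06d}.json")
    shutil.copy2(graph_path, dest)
    return dest


def rollback(graph_path, snap_dir):
    """Restore the most recent snapshot over graph_path; return the snapshot used."""
    existing = sorted(glob.glob(os.path.join(snap_dir, "graph-*.json")))
    if not existing:
        raise FileNotFoundError(f"no snapshots in {snap_dir}")
    shutil.copy2(existing[-1], graph_path)
    return existing[-1]
```

The design choice that matters is ordering: `snapshot` runs before heal touches anything, so `rollback` always has a pre-mutation copy to return to.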

The Numbers, Actually Measured

This is where I have to be careful, because blog posts claiming big percentage wins on small samples get torn apart in the comments — fairly.

Methodology. I ran 9 real queries across 3 graphs. For each, I measured:

- Graph cost: `graphify query <term>` output, tokenised with `tiktoken` (GPT-4 encoding).

- Baseline cost: tokens of the top 5 files returned by `grep -rli <pattern>` on the corresponding extensions, i.e. the files Claude would open if it fell back to grep.

The 5-file cap is deliberate: it's roughly what Claude reads before committing to an answer on a typical question. A bigger cap would inflate savings; a smaller one would deflate them. I picked what matched my observed sessions.
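The measurement script isn't published, so here is my own sketch of the baseline side only, under stated assumptions. It uses a pure-Python stand-in for `grep -rli` (Python regex syntax rather than POSIX, and a sorted traversal so results are deterministic) plus a pluggable `count_tokens` function.

```python
import os
import re


def grep_files(root, pattern, exts):
    """Rough stand-in for `grep -rli <pattern>`, restricted to some extensions.

    Walks directories in sorted order (grep follows filesystem order instead).
    """
    regex = re.compile(pattern, re.IGNORECASE)
    hits = []
    for dirpath, dirnames, filenames in os.walk(root):
        dirnames.sort()  # in-place sort controls os.walk's descent order
        for name in sorted(filenames):
            if name.endswith(exts):
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as f:
                    if regex.search(f.read()):
                        hits.append(path)
    return hits


def baseline_tokens(paths, count_tokens, cap=5):
    """Token cost of reading the first `cap` matched files in full."""
    total = 0
    for path in paths[:cap]:
        with open(path, encoding="utf-8", errors="ignore") as f:
            total += count_tokens(f.read())
    return total
```

To match the methodology, pass a tiktoken counter, e.g. `enc = tiktoken.get_encoding("cl100k_base")` with `count_tokens=lambda s: len(enc.encode(s))`. cl100k_base is the GPT-4 encoding.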

| Query | Graph | Graph tokens | Baseline tokens (top 5 files) | Savings |
|---|---|---|---|---|
| heartbeat daemon | claudeclaw | 1,767 | 12,220 | 85.5% |
| telegram integration | claudeclaw | 1,804 | 21,144 | 91.5% |
| cron job scheduler | claudeclaw | 1,450 | 16,683 | 91.3% |
| status line display | claudeclaw | 1,473 | 12,968 | 88.6% |
| authentication flow | work-monorepo | 1,717 | 484,052 | 99.6% |
| document encryption | work-monorepo | 1,653 | 2,846 | 41.9% |
| pdf generation | work-monorepo | 1,662 | 6,728 | 75.3% |
| case management model | work-monorepo | 1,749 | 3,691 | 52.6% |
| ai agent approval flow | work-monorepo | 1,724 | 7,905 | 78.2% |

Distribution:

- N = 9

- Median savings: 85.5%

- Mean: 78.3% (sample stdev 19.2 points)

- 25th percentile: 64.0%, so three queries in four save roughly two-thirds or more

- Range: 41.9% → 99.6%
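The summary statistics can be recomputed from the table's raw token counts. This sketch uses Python's `statistics` module. Its default `exclusive` quantile method reproduces the 64.0% 25th percentile. NumPy's default linear interpolation on the same nine values would report 75.3% instead, so the quantile method matters at N = 9.

```python
import statistics

# (query, graph tokens, baseline tokens) copied from the results table
RESULTS = [
    ("heartbeat daemon", 1767, 12220),
    ("telegram integration", 1804, 21144),
    ("cron job scheduler", 1450, 16683),
    ("status line display", 1473, 12968),
    ("authentication flow", 1717, 484052),
    ("document encryption", 1653, 2846),
    ("pdf generation", 1662, 6728),
    ("case management model", 1749, 3691),
    ("ai agent approval flow", 1724, 7905),
]


def savings_pct(graph_tokens, baseline_tokens):
    """Percentage of baseline tokens avoided by answering from the graph."""
    return 100.0 * (1 - graph_tokens / baseline_tokens)


def summarize(results):
    s = [savings_pct(g, b) for _, g, b in results]
    q1, _, _ = statistics.quantiles(s, n=4)  # default method="exclusive"
    return {
        "n": len(s),
        "median": statistics.median(s),
        "mean": statistics.mean(s),
        "stdev": statistics.stdev(s),  # sample standard deviation (n - 1)
        "p25": q1,
        "min": min(s),
        "max": max(s),
    }


for key, value in summarize(RESULTS).items():
    print(f"{key}: {value:.1f}" if isinstance(value, float) else f"{key}: {value}")
```

Running it prints n 9, median 85.5, mean 78.3, stdev 19.2, p25 64.0, min 41.9 and max 99.6, matching the list above.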

Where the savings collapse

Two queries landed under 60%, and they're worth staring at:

- `document encryption`: 41.9%. Why? The baseline was tiny (2,846 tokens across 5 small files), and the graph query's fixed ~1.6K overhead ate most of the win. Add the 2–3 verification reads that follow every graph query and graphs can lose outright on focused questions over small subsystems. Grep is already optimal there.

- `case management model`: 52.6%. Same reason: the model file is compact, and reading it directly is close to free.

The graph shines when the question's answer lives in a big, scattered, cross-cutting subsystem. It's marginal when the answer lives in one small file.
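The fixed-overhead effect is plain arithmetic. Treat the graph query as a constant cost G and the grep baseline as B, ignoring the follow-up verification reads. The saving, and the baseline needed to reach a target saving s*, are then:

$$
s(B) = 1 - \frac{G}{B}, \qquad s(B) \ge s^{*} \iff B \ge \frac{G}{1 - s^{*}}
$$

So 50% needs B ≥ 2G and 80% needs B ≥ 5G. Take G ≈ 1.65K:

- Document encryption's 2,846-token baseline falls short of 2G ≈ 3.3K.
- Case management's 3,691 tokens barely clears its 2G ≈ 3.5K.
- Pdf generation and the ai agent approval flow both sit below 5G ≈ 8.5K, which is why they land in the 70s.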

Where the savings are suspicious

`authentication flow`: 99.6%. The baseline here is 484K tokens because grep matched several very large files. In reality, Claude wouldn't read all 484K: it would hit context limits and start summarising. So "99.6%" here really means "the graph answered a question the grep baseline couldn't even fit in context." That's a real win, but it's a different win from the others; I wouldn't average it into a clean percentage without flagging it.

How this compares to published benchmarks

- Aider's repo map uses tree-sitter + PageRank to compress a whole codebase into a ~1K-token symbol summary on every turn. Same core primitive (structural graph + ranking), different delivery model.

- A DEV.to writeup reports a 99.2% reduction (121×) on 372 questions across 31 languages.

- A Claude Code-specific post reports 71.5× fewer tokens per query vs. re-pasting raw context.
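Aider's ranking primitive is PageRank over a graph of code references. The sketch below is plain power-iteration PageRank, not Aider's implementation, and the (caller, callee) edges in the usage line are hypothetical.

```python
def pagerank(edges, damping=0.85, iterations=50):
    """Power-iteration PageRank over a directed graph given as (src, dst) pairs.

    Dangling nodes (no outgoing edges) spread their rank evenly over all nodes,
    so the ranks always sum to 1.
    """
    nodes = sorted({n for edge in edges for n in edge})
    out = {n: [] for n in nodes}
    for src, dst in edges:
        out[src].append(dst)
    n = len(nodes)
    rank = {v: 1.0 / n for v in nodes}
    for _ in range(iterations):
        dangling = sum(rank[v] for v in nodes if not out[v])
        new = {v: (1 - damping) / n + damping * dangling / n for v in nodes}
        for v in nodes:
            for w in out[v]:
                new[w] += damping * rank[v] / len(out[v])
        rank = new
    return rank


ranks = pagerank([("cli", "core"), ("daemon", "core"), ("tests", "core")])
print(max(ranks, key=ranks.get))  # core
```

Symbols that many others point at float to the top, which is what lets a ranker fit the most-referenced parts of a repo into a small token budget.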

Those numbers are fatter than mine because they picked worst-case baselines ("what if Claude reads every matched file in the repo"). My median of 85% on a top-5 baseline is the conservative read of the same phenomenon — the floor, not the ceiling.

If you want to reproduce: the tiktoken measurement script is ~100 lines of Python — reply to this post or DM me and I'll send it. Point it at your graphify graphs, run it. Numbers are numbers.

The Pros (That Survive the Methodology Audit)

- Cross-layer edges grep can't find. The semantic pass caught a TypeScript union shadowing a Python enum across the schema boundary. That's not a speed win — that's a class of bug grep will never surface.

- Community structure as architectural map. The work-monorepo graph clustered ~340 nodes into a coherent "document editor" community. Knowing the boundary before I refactor is the kind of thing that prevents accidental cross-subsystem coupling.

- Confidence tags on every edge. Each edge is EXTRACTED (tree-sitter, ground truth), INFERRED (LLM pass, probably right), or AMBIGUOUS (verify). I know when to trust the graph and when to double-check.

- Self-healing is the real moat. Without `/kg-evolve`, this would have been abandoned by week two. With it, the graph is never more than a session behind reality.
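Acting on the confidence tags can be mechanical. Here is a sketch of that triage, assuming each edge is a dict with a `confidence` field (a hypothetical layout). Treating an untagged edge as AMBIGUOUS is my choice here, not something graphify is known to specify.

```python
from collections import defaultdict


def triage_edges(edges):
    """Group edges by confidence tag; untagged edges are treated as AMBIGUOUS."""
    groups = defaultdict(list)
    for edge in edges:
        groups[edge.get("confidence", "AMBIGUOUS")].append(edge)
    return dict(groups)


def needs_verification(edges, allow_inferred=False):
    """Edges to check by hand: all but EXTRACTED ones (and INFERRED ones too, if allowed)."""
    trusted = {"EXTRACTED", "INFERRED"} if allow_inferred else {"EXTRACTED"}
    return [edge for edge in edges if edge.get("confidence") not in trusted]
```

Setting `allow_inferred=True` is the "probably right is good enough" mode. It fits exploratory questions better than refactors.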

The Cons (That Also Survive the Audit)

- Graphs lose on small focused subsystems, as the 42% and 53% queries above show. If your question fits in one file, grep is already optimal.

- Semantic edges hallucinate. INFERRED edges are an LLM's opinion. I've seen it invent relationships between modules that just share vocabulary. Read the confidence tags.

- Healing can go wrong. The heal stage is an LLM pass mutating graph state — occasionally it makes things worse. Snapshot + rollback is non-negotiable.

- Claude has to actually use the graph. Without mandatory triggers in `CLAUDE.md`, the model defaults to grep every time. Phrase them as "ALWAYS consult the graph first"; anything softer gets ignored under pressure.

- Upfront cost. First build: ~15 minutes and a few dollars. For a repo you visit once a quarter, it's not worth it.

Is It Worth It?

For a codebase I touch daily — yes, unambiguously. Median 85% savings pays for the build cost in a handful of sessions, and the cross-layer edges have caught real bugs I'd otherwise have shipped.

For a codebase I visit once a quarter — no. The build cost and drift tax outweigh the wins.

The deeper thing, and the reason I wrote this up: the bottleneck on AI coding isn't reasoning, it's context. Every session starts cold, and every cold start burns tokens rediscovering things the model already "knew" last time. Persistent, self-healing structural memory is one answer to that — and we're going to see a lot more of it over the next year, because it's the ANALYZE the field has been missing.
